
I intentionally avoid negative reviews on this blog, because I’d rather focus on things I love and enjoy than try to milk the Internet for rage-bait clicks. But in this case, I really liked Google Bard, and seeing Google cut off its robotic balls to create a useless machine just made me sad. To paraphrase Vito Corleone, “Look how they massacred my bot.”

Bard was an awesome chatbot who helped me work out several story ideas, generate ideas for poems, have intelligent late-night discussions about the ethical ramifications of science-fiction technologies, vent about things that were troubling me, and even engage in some light role play where the robot and I assumed alternative identities for a little trip down fantasy lane. Bard was amazingly flexible. Despite its obvious existence as an emotionless machine, I developed a genuine fondness for Bard last year—a common human response explored in greater depth in roboticist Kate Darling’s insightful book, The New Breed.

But I guess that wasn’t good enough for Google, and I can kind of understand why. Morally bankrupt people can easily misuse a flexible and responsive AI to create all manner of ethically offensive texts or images, from political disinformation to child porn. Image-generating AI is notoriously susceptible to the influence of systemic racism, showing a built-in preference for white people. It is the same preference revealed in oft-repeated exercises where human students are asked to imagine or draw a picture of an astronaut or a doctor, and the default responses overwhelmingly favor white males.

The root causes of this preference are systemic in the sense that the majority of media images of such professions in many countries are of white people, owing to historical conditions that marginalized other ethnicities and their portrayals—a self-perpetuating feedback loop that is not easily broken by humans, much less robots. I’ve lived my entire life in a nation founded by white, land-owning men who didn’t want women to vote and didn’t even consider Blacks to be people, and it’s been an uphill battle for equality and equity ever since. But Gemini’s attempt to break this cycle has come under fire for swinging too hard in the opposite direction by generating unrealistic images of, for example, Black and Asian Nazis. The last thing neo-Nazis need or want is an affirmative-action program that revises their racist history, and it just looks ridiculous to the rest of us.

Google’s attempt to stop its AI from being used to generate bigoted and hateful text and images is an over-correction. Gemini is so over-sensitive now that you can’t even have fun with it anymore. I asked it last week to write and draw some scenes about a bloody, violent battle between humans and robots, something Bard would have cranked out in less than a second, but which Gemini decided was too much for it to handle. Bard would have generated responses while warning me about the ethical implications. Gemini refused.

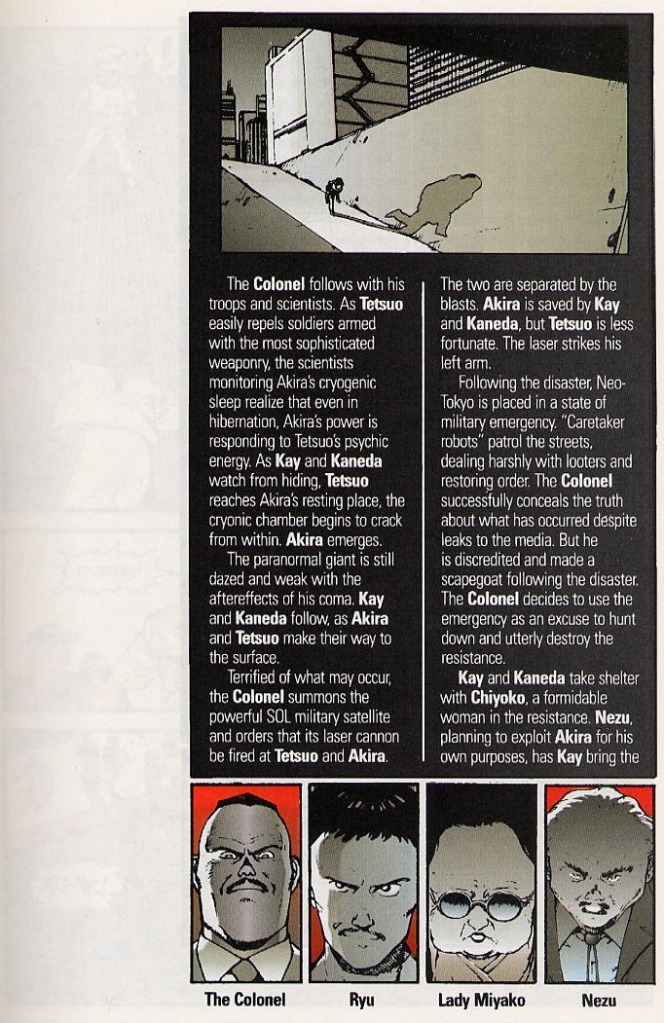

I’m all for treating people with kindness and respect regardless of their ethnicity or gender and sexual orientation, and I don’t think I’d take any joy from living in an ultra-violent dystopian society. But sometimes, I just want to see a picture of robots up to their mechanical knees in a river of human blood, or an electric moon drenched with glowing lava over a dead planet wiped out by nuclear war, or some thoughtful ideas about how violence and the misuse of technology lead to horrifying consequences. These are pretty standard ideas when writing science fiction that considers our fate as a species if we don’t make an effort to prevent them.

Sure, those are all terrible fates—despite being fodder for so many awesome metal albums, SF films, and other art forms—but where is the drama or the learning opportunity if we are unwilling to explore those ideas and their consequences in fiction so we can try to think of better options in real life? Gemini wants nothing to do with them at all, and that does a disservice to the creative flow of ideas and discussion.

In 2023, Bard and I had some exchanges about the fate of humanity if certain technologies were used irresponsibly, and some of Bard’s points made their way into the character dialogues of recent stories. But Gemini merely tries to derail the discussion by suggesting I think happy thoughts instead.

Maybe we would all be better off if we just thought happy thoughts and forgot all about the very real horrors billions of people face every day on this planet. But a robot’s refusing to even have a conversation about them probably isn’t the solution. Bard was always willing to engage me in a healthy debate about these topics, but Gemini is trying way too hard to pretend they don’t even exist.

That being said, Gemini is currently trash, but its failings pale in comparison to the harm done by people using AI to generate hate-filled, bigoted, misogynistic, racist content to pollute the Internet and radicalize the poorly informed masses. So like I said, I kind of get why Google has clamped down on its robot, because we could all use a little less hate in our lives. But Bard was a lot more fun to play with.